You will encounter many different error messages while coding. While sometimes annoying, these messages are intended to help you identify and fix issues in your code by describing what went wrong, which makes them a crucial part of your debugging workflow. However, some error messages are confusing because their text is ambiguous and they say little about possible causes, like Snowflake’s <EOF> syntax error. 😕

In this article, you'll learn what an <EOF> error in Snowflake is, what happens when this error occurs in Snowflake, some possible sources of this error, and how to find and fix it.

What is the <EOF> syntax error?

An unexpected '<EOF>' syntax error in Snowflake simply means that the SQL compiler has hit an obstacle while parsing your code. Specifically, the compiler reached the end of your input (EOF, or end of file) while it was still expecting more code, so it concludes that something is missing or incomplete. 🛑

This error can be caused by any single statement in your code, and the SQL compiler will give you positional information on the flawed statement if it can. When debugging this error, you'll typically find incomplete parameters, function calls, or loop statements that stop the statement from compiling. Generally, the compiler expects certain sequences in code, like closing brackets and the clauses that complete a statement. When a construct is left hanging with no more code to complete it, the compiler raises an unexpected '<EOF>' syntax error.
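As a minimal sketch of the idea, the first statement below is cut off before its subquery is closed, so the parser runs out of input while still expecting a closing parenthesis. The literal value and the alias t are made up for illustration, and the exact position Snowflake reports will depend on how the statement is submitted.

```sql
-- Incomplete: the parenthesis opened before the subquery is never closed,
-- so the statement ends while the parser is still expecting ")".
-- This is the kind of input that is likely to be reported as unexpected '<EOF>'.
select count(*) from (select 1 as x;

-- Complete: the subquery is closed and given an alias.
select count(*) from (select 1 as x) as t;
```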

Solving the '<EOF>' syntax error in Snowflake

The unexpected '<EOF>' syntax error can occur with most SQL commands run in Snowflake. The following tutorial goes through the process of creating a data set in Snowflake and explores examples of this error in SQL queries.

Prerequisites

To follow along with this article, you'll need the following:

❄️ A Snowflake account.

❄️ A local installation of SnowSQL, a CLI tool that allows Windows, macOS, and Linux users to connect their systems to their Snowflake instances.

For this article, you'll use the gearbox_data.csv file from this GitHub repository. The data set is a sample of a Kaggle project that simulated the functioning of two mechanical teeth (healthy and broken) across different load variations.

Download the data set using the following CLI command:

curl -L https://github.com/Soot3/snowflake_syntax_error_unexpected_eof_demo/raw/main/gearbox_data.csv --output gearbox_data.csv

Connecting SnowSQL to Snowflake

This process allows you to set up communication between your CLI and your Snowflake instance. You can then use SnowSQL CLI commands to interact with Snowflake and its resources. Connect to your Snowflake instance using the following command in your CLI:

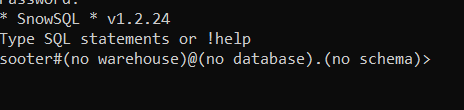

snowsql -a <account-name> -u <username>

Then, input your Snowflake password to finish logging in to the Snowflake CLI. If you're using a new account, your Snowflake instance will be bare, with no Snowflake warehouse, database, or schema resources set up.


But don’t worry, we’ll walk through creating those resources using the relevant SnowSQL commands. 🚶

Utilizing SnowSQL for Your SQL Commands

After connecting SnowSQL to your Snowflake instance, you can utilize the tool to execute the necessary queries and SQL operations. Your first task is to create a database, schema, and warehouse where you can run queries for your resources.

Create a database with the following SQL command:

create or replace database sf_errors;

This creates a database named sf_errors, automatically creates a public schema, and sets the new database as the default one for your current session.

You can check the database and schema name you are using with the following SQL command:

select current_database(), current_schema();

Create a warehouse, defining the resources to be used for your queries, with the following SQL command:

create or replace warehouse sf_errors_wh with
  warehouse_size = 'X-SMALL'
  auto_suspend = 180
  auto_resume = true
  initially_suspended = true;

With the database, schema, and warehouse created, all that remains is to load data into a table so that you can run SQL queries and commands against it.

You'll be using the gearbox_data.csv file you downloaded earlier. To input this data set into your Snowflake account, first create an empty table with the necessary structure and data types.

You can do so, using the downloaded data set's columns, with the following SQL command:

create or replace table sf_table (
  a1 double,
  a2 double,
  a3 double,
  a4 double,
  load int,
  failure int
);

Your next step is to populate the empty table you created with the CSV file's data. This starts with uploading the file to a Snowflake stage, which you can do from the SnowSQL CLI with the PUT command.

Unexpected <EOF> syntax errors and fixes

Up until this point, we've been running SQL commands that have been parsed by the SQL compiler and executed once validated. This validation process is basically the compiler running through checks based on the SQL syntax, the defined variables, and the SQL keywords used. You'll now send in a command that the compiler recognizes as incomplete according to its standard syntax.

The PUT command has a particular syntax that also depends on the operating system SnowSQL is running on (Linux/Windows). Generally, the PUT command requires the path of the file you want to upload and the location in Snowflake (a stage) where the data will be uploaded.
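For reference, the general shape of a complete PUT command on each platform looks roughly like the sketch below. The local paths are hypothetical placeholders, not paths from this tutorial, and @~ is just one possible target stage. In both forms, the local file path is followed by a stage reference.

```sql
-- Linux/macOS (hypothetical path): file:// followed by an absolute path,
-- which yields three slashes in a row.
put file:///tmp/data/gearbox_data.csv @~;

-- Windows (hypothetical path): file:// followed by the drive letter and path.
put file://C:\temp\data\gearbox_data.csv @~;
```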

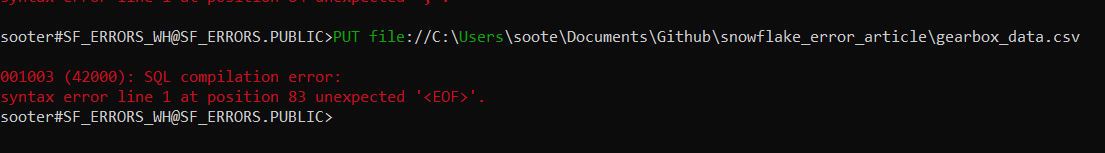

Try out this command with SnowSQL:

put file://<directory-of-your-data>

/* Example:
PUT file://C:\Users\soote\Documents\Github\snowflake_error_article\gearbox_data.csv
*/

As you can see, running this command will generate an unexpected '<EOF>' syntax error, since the compiler expects all the required parameters for the PUT command (the local file path and the target location in Snowflake) and reaches the end of the command before finding the second one.

Note: You might need to use Ctrl + Enter rather than Enter alone to run this command, as by default, SnowSQL requires a closing ; before executing commands.

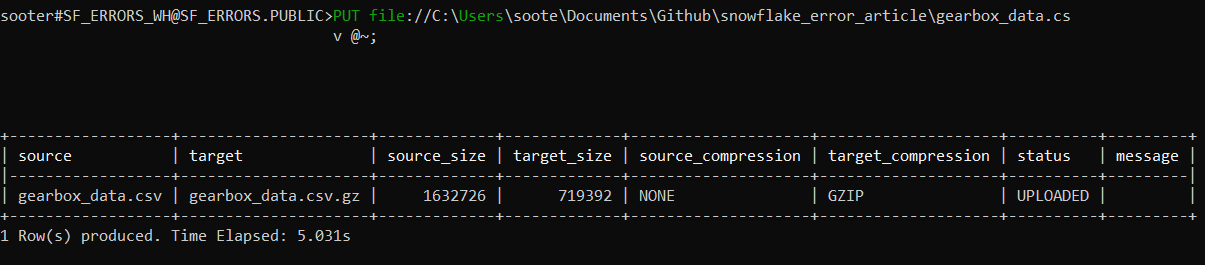

You can fix this error by adding in the missing required parameter:

put file://<directory-of-your-data> @~;

/* Example:
PUT file://C:\Users\soote\Documents\Github\snowflake_error_article\gearbox_data.csv @~;
*/

@~ in SnowSQL refers to your user stage, an internal stage that Snowflake allocates to each user for storing files. Think of it as your home directory in Snowflake (similar to ~ in a shell).
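Once the file is in the user stage, a COPY INTO statement can load it into sf_table. The following is a sketch under two assumptions that the article does not confirm: that PUT ran with its default gzip compression (so the staged file is named gearbox_data.csv.gz), and that the first row of the CSV is a header whose columns match the table's column order.

```sql
-- List the files in the user stage to confirm the upload and the staged file name.
list @~;

-- Load the staged file into the table created earlier.
-- Assumption: the staged name is gearbox_data.csv.gz (PUT's default compression).
-- Assumption: the first CSV row is a header, hence skip_header = 1.
copy into sf_table
  from @~/gearbox_data.csv.gz
  file_format = (type = csv skip_header = 1);

-- Spot-check a few loaded rows.
select * from sf_table limit 5;
```

If the staged file name differs, use the name reported by the list command in the COPY INTO statement.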

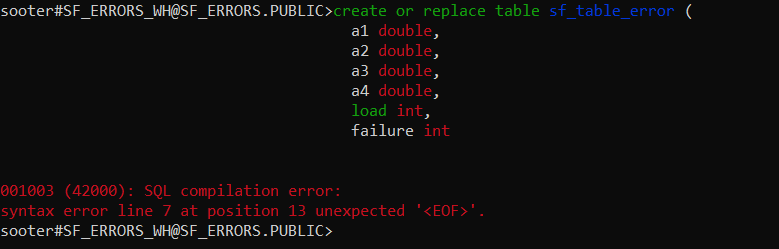

The unexpected '<EOF>' error can occur with any command that is missing part of its required syntax. For instance, the earlier create table command will generate this error if the closing parenthesis and semicolon are left out:

create or replace table sf_table_error (
  a1 double,
  a2 double,
  a3 double,
  a4 double,
  load int,
  failure int

There are a number of ways a command can end up incomplete in the eyes of the SQL compiler. It's always important to confirm the syntax of the commands you are using. If you encounter an unexpected '<EOF>' syntax error, go through your statement line by line and make sure every required element of the command is present.

All kinds of commands can be affected, including CTEs, stored procedures, pipes, scripting blocks, and even SELECT statements.
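To illustrate with the sf_table created earlier, here is a sketch of two more statements that stop before the parser has everything it needs, each followed by a completed version. The CTE name healthy and the filter values are hypothetical, and the incomplete statements are expected to fail rather than return results.

```sql
-- Incomplete CTE: the WITH clause is defined but no statement follows it.
with healthy as (
  select * from sf_table where failure = 0
);

-- Completed: a SELECT consumes the CTE.
with healthy as (
  select * from sf_table where failure = 0
)
select count(*) from healthy;

-- Incomplete SELECT: WHERE is present but its condition is missing.
select a1, a2 from sf_table where;

-- Completed: the WHERE clause has a condition.
select a1, a2 from sf_table where failure = 1;
```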

Putting your skills to the test 💻

Error messages are helpful tools for your debugging processes, as they tell you exactly what obstacles were encountered in your code and where to find them. Sometimes, they’re direct pointers to the issues that plague your system. But sometimes, they’re just confusing. 🤷

The unexpected '<EOF>' syntax error, just like any other error, helps you write correct, standards-compliant code. At its core, this error is about the syntax of your code and whether it matches the grammar the compiler expects. In this article, you learned more about the unexpected '<EOF>' syntax error, its causes, and how to debug and fix it.
