Last November, we shipped a new product: Census Embedded. It's a massive expansion of our footprint in the world of data. As I'll lay out here, it's a natural evolution of our platform in service of our mission, and it's poised to help a lot of people get access to more high-quality data.

First, why Census?

The original mantra of Census was “a single source of truth, synced to everything.” Put another way, our goal was to get your best data models (from customer & account profiles to event streams, transactions and orders) shared seamlessly with all your GTM tools. Whether you’re an Account Executive in Salesforce, a Marketing Manager in Braze or a Financial Controller in NetSuite, you need the best possible data.

This is valuable because it means every person or team in your company can automate workflows (e.g. automatically reach out to an account when X, Y, or Z happens instead of blindly reaching out every day) and ensure these workflows use correct, complete, and approved data.

Implicit in our vision is that cloud/SaaS continues to create tons of apps in the world (who cares about keeping 1 thing in sync 😂) and every department wants to take action in their bespoke tool.

Along the way, we set up your central analytics repository (whether it's Snowflake or a trusty Postgres) to be a "broker" for anyone who wants data. We introduced this hub & spoke approach in a post over 3 years ago. We built and battle-tested 200+ integrations with SaaS products. When I say "battle-tested", I mean that some of our customers sync 1B+ user records into tools that run the gamut in terms of API reliability...

Enter the rest of the world

There's a lot of value in a central broker for data. You can manage permissions in one place. You can handle PII rules in one place. You can handle data quality (correctness & completeness) in one place. This governance may seem like a tax, but I believe it’s crucial to making organizations more agile. When you can't manage all these rules centrally, everything downstream gets slower and worse.

The driving force behind our growth has always been the shift towards cloud data warehouses.

With infinite storage and a flexible compute engine, cloud data warehouses made building something like Census possible. And as organizations centralize more of their data, they can now benefit from the other great feature of these platforms: separated workloads. This means it’s trivial to dedicate resources to serve a new use case without affecting any existing user. Combined with our entity caching technology, we can now help 2 constituents we'd been ignoring as a broker for data: external stakeholders and SaaS products themselves. They want access to your company's data and it’s crucial to share that central governance layer.

External consumers

What does it mean to have external consumers of your first-party data? I'm not talking about the shameless selling of consumer information but rather a secure & compliant way of accessing your valuable insights. Let’s run through some examples:

- Say you run an asset maintenance software company. You offer a SaaS solution to manage work orders, monitor asset health, and improve inventory management. Along the way you capture millions of datapoints about machines to offer proactive maintenance. Your app cannot possibly keep up with all the ways your customers want to use the generated data. They want to combine it with their ERP system. You could offer a rich export function for each tenant if only you had native integration from your central analytics repository to any tenant's business applications. Enter Census Embedded.

- Say you are an online toy retailer trying to build a retail media network. You want to display the most popular toys in a section that will get the most attention (and ideally a different section for different buying audiences). The toy-making companies want to pay for their products to appear in prominent places on the website. Doing this well means sharing some slice of your sales and audience information with your partners, so they can bid on the right space for their products (Barbie might be more desirable in one section and Ken in another). As the toy store, you'd like to offer limited access to your audience repositories and underlying advertising systems, all of which can be mediated by Census Embedded.

- Say you’re a Data-as-a-Service company that aggregates geographic activity data (e.g. which corners of the city have the most traffic jams). Your customers want to buy easy access to this data, which requires you to get the datasets organized and shared on any platform. Snowflake makes this easy enough with its data sharing marketplace, but the rest of the world is left stranded. Census Embedded allows you to securely deliver these datasets into any data warehouse *and* any business application.

Onboarding, the other side of the coin

Since the dawn of SaaS, everyone has had to solve the cold start problem. What do you do when someone signs up for your app? Some products offer an amazing single-player experience (e.g. I can start writing notes in Notion, I can start drawing in Figma). Some products are almost unusable without some data from your organization: expense management doesn't work without ingesting your org chart and a CRM/MAP doesn't work without your customer activity data.

Thus, every SaaS developer has to build some kind of solution for importing your data. This is far too costly for 99% of teams, so they expose an API and leave this burden to their customers. Of course, these APIs are often ill-suited to keeping large amounts of data in sync (we could write tomes about this at Census after 5 years of developing against 200+ SaaS APIs). So their best customers buy tools like Census to get this done. After all, a single CSV file import is not what users need. They need their best data models kept in sync with any app that depends on them, at any scale and as fast as possible.

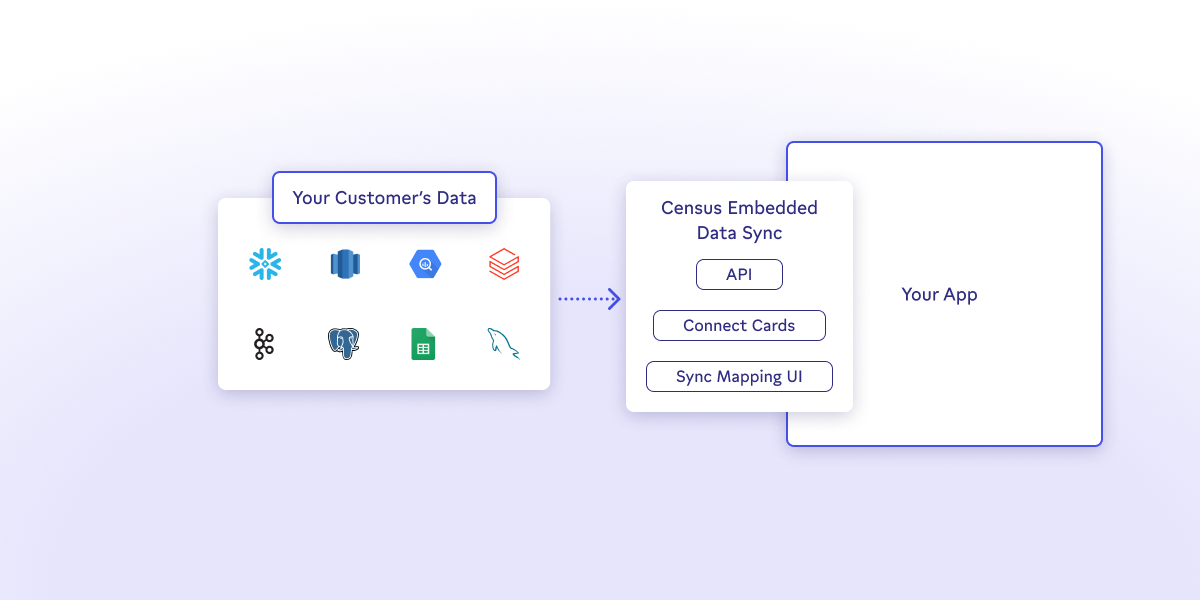

Census Embedded takes our rich data warehouse sync engine and data mapping technology and makes them available for a SaaS product to embed natively. This means you can use our API to offer a "sync your data" button that goes far beyond a CSV file import. It allows your users to securely connect to the data platform that stores their most accurate customer records and select how they want that data mapped into your application, while you manage the subsequent sync schedules. All of it comes with our world-class validations (to ensure no bad data spreads) & observability (so the data & IT organizations always know where their data is going).
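To make the "sync your data" flow a bit more concrete, here is a minimal sketch of the three pieces described above: a mapping from warehouse columns to application fields, a validation step that holds back bad rows, and a schedule. This is not the Census API. Every name here (`FieldMapping`, `SyncConfig`, `apply_mappings`, the column and field names) is hypothetical and exists only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class FieldMapping:
    """Hypothetical: one warehouse column mapped to one field in your app."""
    source_column: str
    destination_field: str
    required: bool = False


@dataclass
class SyncConfig:
    """Hypothetical: what a user picks after connecting their data platform."""
    source_model: str
    mappings: list[FieldMapping]
    schedule_minutes: int = 60  # how often the sync should re-run


def apply_mappings(rows, config):
    """Map source rows to destination records.

    Rows missing a required value are rejected instead of being synced,
    so incomplete data never reaches the destination.
    """
    accepted, rejected = [], []
    for row in rows:
        missing = [
            m.source_column
            for m in config.mappings
            if m.required and row.get(m.source_column) in (None, "")
        ]
        if missing:
            rejected.append({"row": row, "missing": missing})
            continue
        accepted.append(
            {m.destination_field: row.get(m.source_column) for m in config.mappings}
        )
    return accepted, rejected


# Example: a customer maps their "customer_profiles" model into your app.
config = SyncConfig(
    source_model="customer_profiles",
    mappings=[
        FieldMapping("email", "contact_email", required=True),
        FieldMapping("plan", "plan_tier"),
    ],
    schedule_minutes=15,
)
rows = [
    {"email": "a@example.com", "plan": "pro"},
    {"email": None, "plan": "free"},  # fails validation: no email
]
accepted, rejected = apply_mappings(rows, config)
```

In this toy version, the rejected list plays the role of observability: it records which rows were held back and why, rather than silently dropping them.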

The ultimate customer expectation when they sign up for an app is that their data is just there (as if there were no integration required). This has always been our goal: to make every SaaS app operate on a seamless cache of its customers' internal data platforms (warehouse, lake, or whatever comes next).

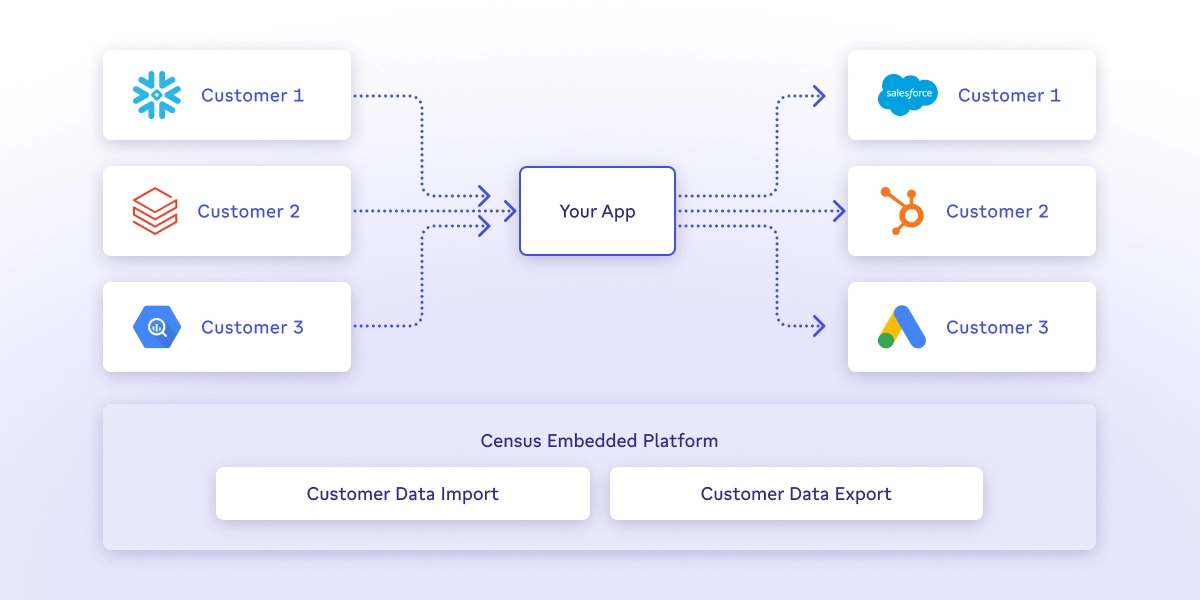

A universal platform for accessing data

The core of Census is its focus on delivering data you can trust without worrying about the infinite permutations of models and integrations. Inverting this sentence, Census helps anyone get access to an organization's best data in the place that they need it. With Embedded, this is now available for any external developer. Whether it's pushing insights to a partner organization, sharing analytics with a customer or synchronizing data from a customer, Census Embedded has you covered. Internally, companies often deploy Census to break down inter-department silos. Now you can break down inter-organization silos and set data free :-).

There’s a lot more to come in 2024 on this front. Look out for non-stop API improvements followed by the ability to use more embeddable UI widgets (today you already get amazing Plaid-style connect cards and we’re going to go a lot further). Our goal is to make Census Embedded trivial to integrate into your stack while giving you maximum power & observability.