At Census, we’ve had the privilege of working with large and highly sophisticated customers like Canva, which communicates daily with its 125 million monthly users. Businesses with higher data maturity often prefer to model audiences in their data warehouse rather than in a point-and-click interface, for several reasons:

- Data teams can maintain the warehouse as the source of truth for customer data, including critical audience definitions like exclusion lists.

- Audiences are defined in version-controlled SQL, which allows for more rigor and observability, and can be managed within existing data team workflows.

- Because audiences are defined in code, this technique scales much more easily to large user bases, high-volume audiences, and very large numbers of audiences.

- Data teams are able to ensure trustworthy and approved audiences for the benefit of marketers.

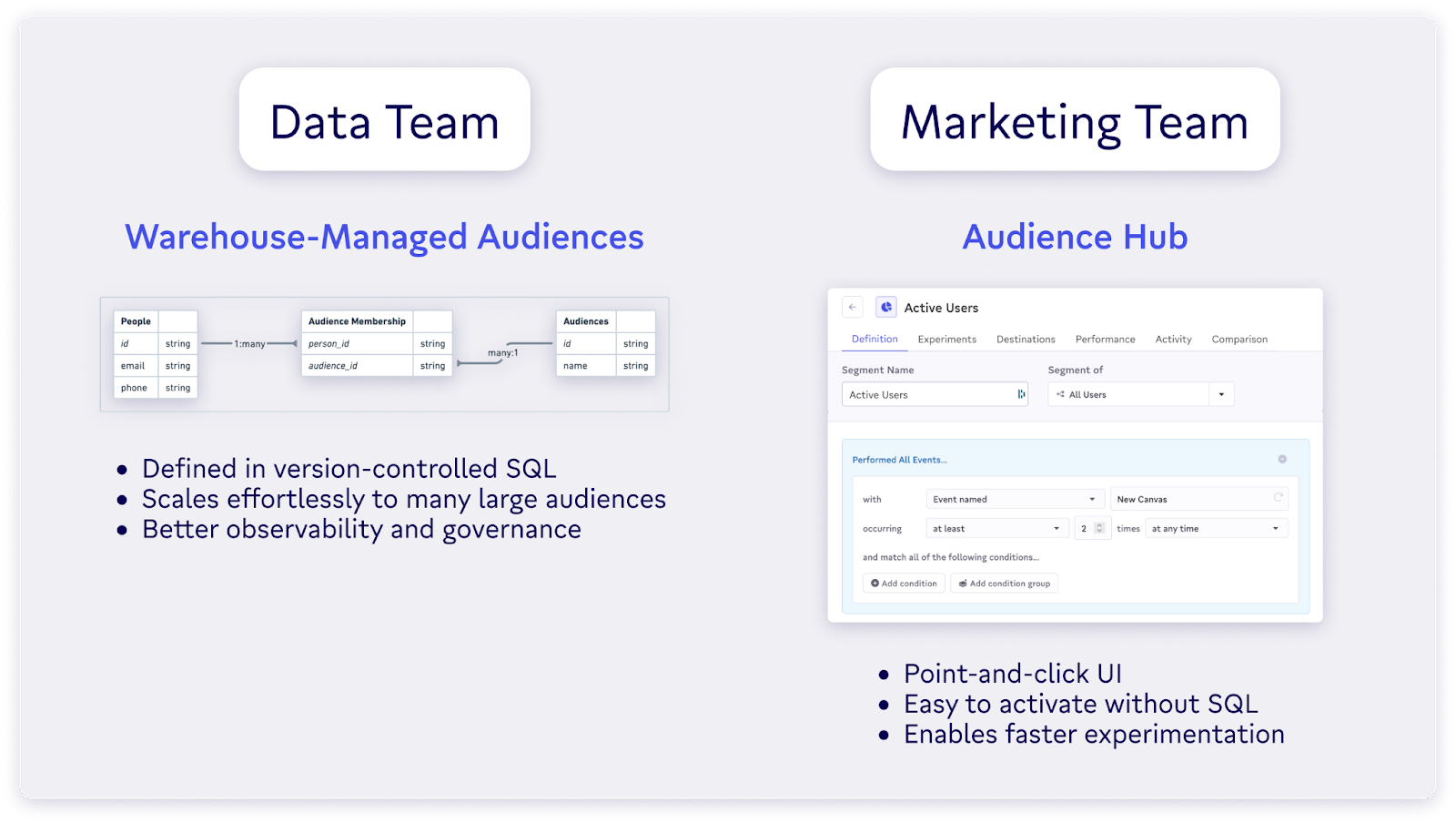

Last year, we released Census Audience Hub to cater specifically to marketers who want to use their warehouse to power customer engagement. Although it was a game changer for helping non-technical users build complex audiences without SQL, it wasn’t designed for situations in which some (or all) audiences are already defined in the warehouse.

As a result, customers often ended up recreating their audiences in Audience Hub, rather than just mirroring the definitions directly from their warehouse.

Introducing Warehouse-Managed Audiences

Today, we’re excited to announce Warehouse-Managed Audiences. This feature allows data teams to manage audiences programmatically from their warehouse while taking full advantage of all the features that come with Audience Hub, including:

- One-click audiences

- Ad platform match rates

- Exclusion lists

- Experiments

- Performance tracking

- Audience comparisons

This approach makes it straightforward to manage hundreds or thousands of audiences while keeping the data warehouse as the source of truth, which is a data team’s dream come true. We’re proud to be the first and only marketing platform to enable importing audiences directly from your data warehouse.

How to use Warehouse-Managed Audiences

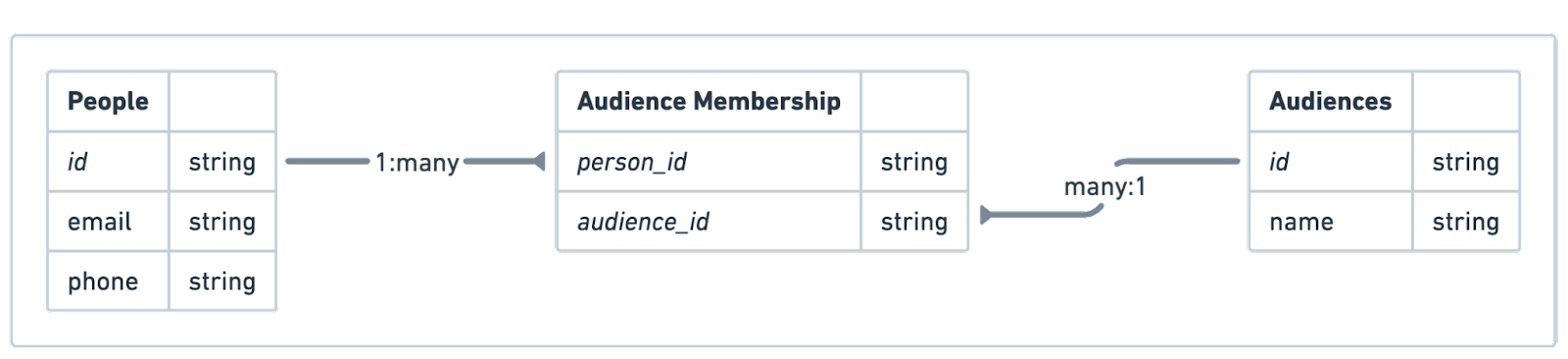

To support importing audiences directly from the warehouse, we’re introducing a data standard for defining audiences. This standard requires three distinct tables: a list of people, a list of audiences, and a join table that tells Census which people are in each audience.

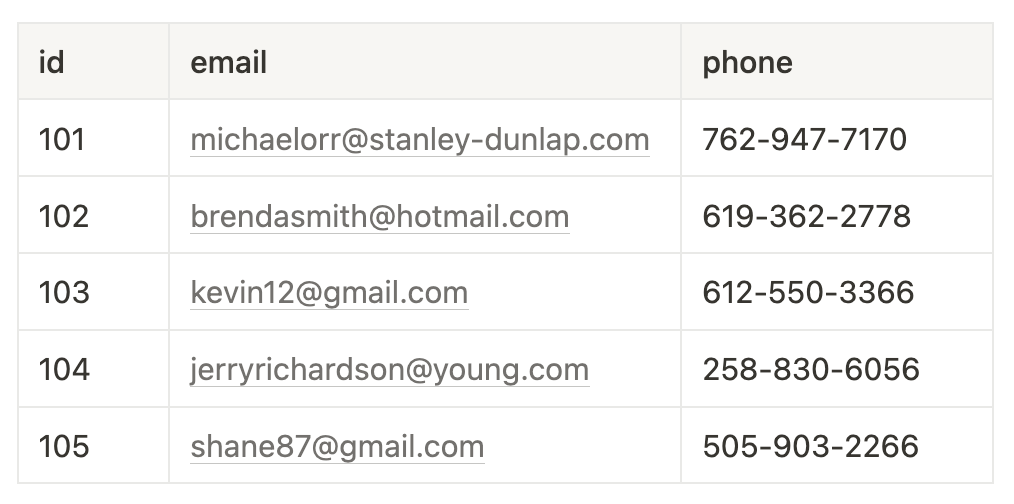

People

Also known as contacts, customers, users, etc., this is a list of all people belonging to every audience you plan to model in your warehouse.

Audiences

This is a list of all audiences you plan to model in your warehouse. Each audience must have a unique ID (assigned by you) and a name, which will be reflected in Census.

Audience Membership

This is a join table that tells Census which people are in each audience. Each row must include a unique pair of person and audience identifiers.
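
The three tables described above can be sketched as a small, runnable example. This uses Python's built-in `sqlite3` as a stand-in for a real warehouse, and every table and column name here (`people`, `audiences`, `audience_membership`, `person_id`, `audience_id`, `email`) is an assumption made for illustration, not the exact schema Census requires. Check the product documentation for the real column requirements.

```python
import sqlite3

# Hypothetical table and column names: the article specifies only the three
# tables and their roles, not an exact schema.
SCHEMA = """
CREATE TABLE people (
    person_id TEXT PRIMARY KEY,
    email     TEXT
);
CREATE TABLE audiences (
    audience_id TEXT PRIMARY KEY,   -- unique ID assigned by you
    name        TEXT NOT NULL       -- name shown in Census
);
CREATE TABLE audience_membership (
    person_id   TEXT NOT NULL REFERENCES people(person_id),
    audience_id TEXT NOT NULL REFERENCES audiences(audience_id),
    PRIMARY KEY (person_id, audience_id)  -- each pair may appear only once
);
"""


def build_example() -> sqlite3.Connection:
    """Create the three tables in memory and load a few sample rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany(
        "INSERT INTO people VALUES (?, ?)",
        [("p1", "ada@example.com"), ("p2", "lin@example.com")],
    )
    conn.executemany(
        "INSERT INTO audiences VALUES (?, ?)",
        [("a1", "Trial users"), ("a2", "Do not contact")],
    )
    conn.executemany(
        "INSERT INTO audience_membership VALUES (?, ?)",
        [("p1", "a1"), ("p2", "a1"), ("p2", "a2")],
    )
    conn.commit()
    return conn


def members_by_audience(conn: sqlite3.Connection) -> dict:
    """Resolve the join table into {audience name: [person ids]}."""
    rows = conn.execute(
        """
        SELECT a.name, m.person_id
        FROM audience_membership AS m
        JOIN audiences AS a ON a.audience_id = m.audience_id
        ORDER BY a.name, m.person_id
        """
    ).fetchall()
    result = {}
    for name, person_id in rows:
        result.setdefault(name, []).append(person_id)
    return result


if __name__ == "__main__":
    print(members_by_audience(build_example()))
```

The composite primary key on the join table is one way to enforce the "unique pair" rule at the source. Many warehouses do not enforce key constraints, so in practice a deduplicating query or a test in your modeling tool may play the same role.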

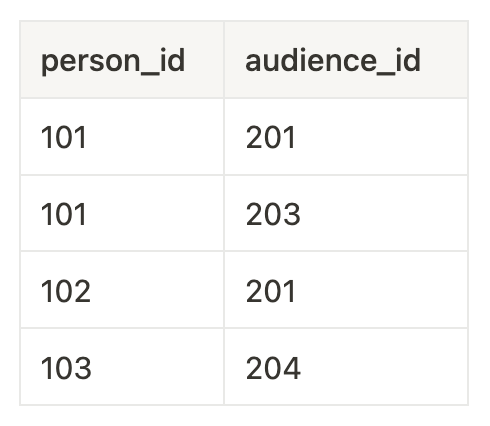

Once these tables are defined in the warehouse, you create three datasets in Census and define the relationships between them using our entity layer.
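
Because the relationships link each membership row to one person and one audience, it can be worth running a quick sanity check on the data before creating the datasets. The following is a minimal sketch of such a pre-check in plain Python. The function name `check_membership` and the shape of its inputs are hypothetical, and this is not part of Census itself, which may handle or report these cases on its own.

```python
from collections import Counter


def check_membership(people_ids, audience_ids, membership):
    """Report problems in a list of (person_id, audience_id) rows.

    Hypothetical helper for illustration: flags repeated pairs and rows
    that point at a person or audience missing from the other two tables.
    """
    people = set(people_ids)
    audiences = set(audience_ids)
    pair_counts = Counter(membership)
    return {
        "duplicate_pairs": sorted(
            pair for pair, n in pair_counts.items() if n > 1
        ),
        "unknown_people": sorted(
            {person for person, _ in membership if person not in people}
        ),
        "unknown_audiences": sorted(
            {aud for _, aud in membership if aud not in audiences}
        ),
    }


if __name__ == "__main__":
    report = check_membership(
        people_ids=["p1", "p2"],
        audience_ids=["a1"],
        membership=[("p1", "a1"), ("p1", "a1"), ("p3", "a1"), ("p2", "a9")],
    )
    print(report)
```

An empty list under every key means the membership rows are consistent with the people and audiences lists.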

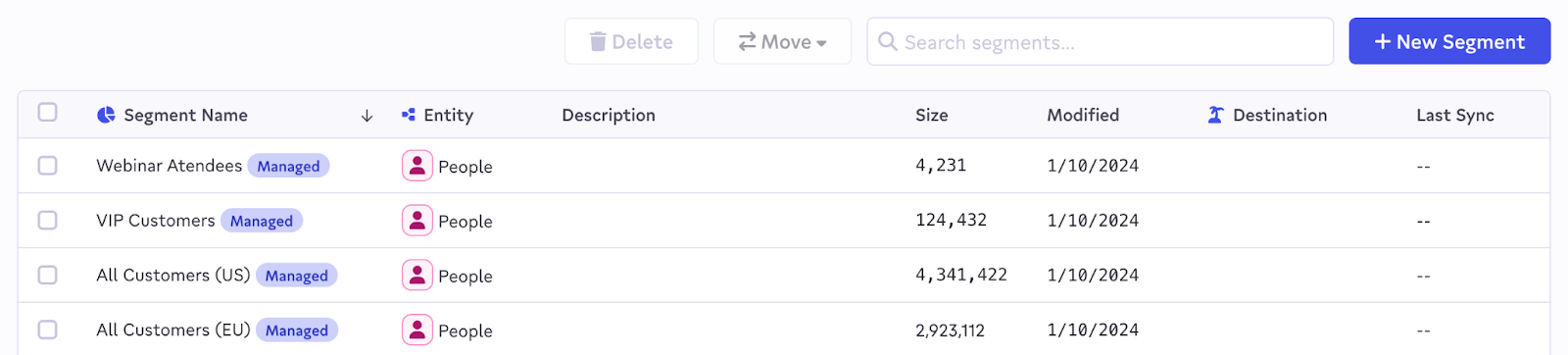

Then, you can sit back and let Census work its magic. Audiences will be automatically mirrored from your warehouse into Census and kept fresh as your source data changes over time.

To keep the warehouse as the source of truth, Warehouse-Managed Audiences are “Census-managed”: they can’t be modified in the UI, but all other Audience Hub features remain available.

For more information on setup, check out our product documentation.

Can marketers still create audiences in Census?

Yes! Marketers can use our no-code Audience Hub to build audiences in Census. These audiences can build on ones imported from the warehouse, or they can be entirely new.

Altogether, this allows data teams to maintain control over a core collection of high-quality, vetted audiences in the warehouse, while allowing marketers the freedom to rapidly experiment with new audiences without being blocked by the data team. When an experimental audience is working well for the marketing team, the data team can incorporate it into the warehouse.

Bottom line: if you have a set of audiences that your data and marketing teams have battle-tested and you want to govern them in code, we’ve got you covered. If you need a more experimental workflow, no problem.

Get started with Warehouse-Managed Audiences today

Beginning today, Warehouse-Managed Audiences are available in private preview to all customers on our Enterprise plan. If you’re interested in checking it out, please reach out to your account manager or send an email to support@getcensus.com.